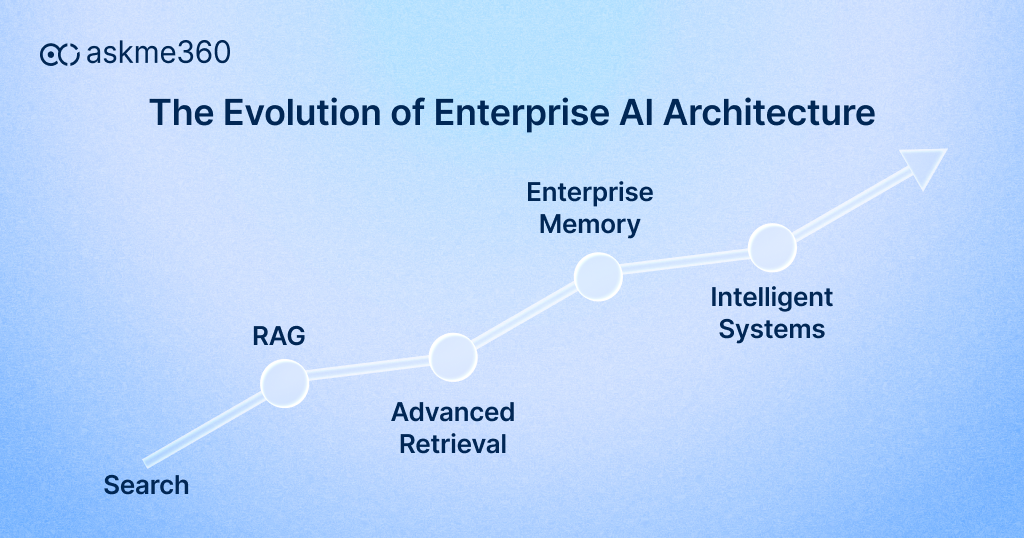

For the last couple of years, RAG architecture (Retrieval-Augmented Generation) has been at the centre of every enterprise AI conversation. It promised something businesses had been waiting for: AI that could finally work with real data instead of generic knowledge.

And initially, it delivered. Teams could ask questions in natural language. Systems could retrieve relevant documents. Answers became faster and more contextual. For many organizations, it felt like a breakthrough.

But as deployments moved from pilot to production, a different reality started to emerge. RAG made answers better, but it didn’t make organizations smarter. That distinction is now shaping how enterprise AI is evolving in 2026.

Let us explore RAG architecture and the evolution of enterprise AI.

Why Basic RAG Architecture Is Not Enough

On paper, RAG is simple. You store your documents, retrieve relevant chunks, and let a language model generate an answer. It works well in controlled environments and demos. At its core, RAG architecture works like this:

- It retrieves relevant data from your knowledge base

- It feeds that data into a language model

- It generates an answer

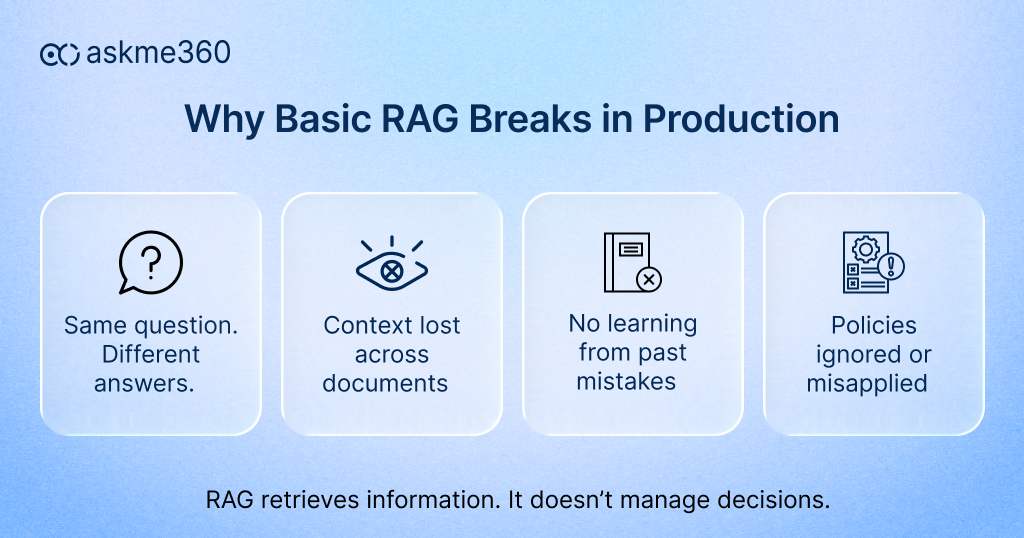
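The three steps above can be sketched in a few lines. This is a minimal illustration, not a production design: the knowledge base, the word-overlap scoring (a stand-in for embedding search), and the `generate` placeholder (a stand-in for a real language model call) are all assumptions made for the example.

```python
from collections import Counter

# Hypothetical in-memory knowledge base; a real system would use a document store.
KNOWLEDGE_BASE = [
    "Refunds are approved by the finance team within 14 days.",
    "Purchase orders above 10000 USD require director approval.",
    "Employees accrue 1.5 days of leave per month.",
]

def tokenize(text: str) -> list[str]:
    """Lowercase the text and strip basic punctuation from each word."""
    return [w.strip(".,?").lower() for w in text.split()]

def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Step 1: rank documents by word overlap with the query."""
    q = Counter(tokenize(query))
    scored = [(sum((q & Counter(tokenize(d))).values()), d) for d in docs]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for score, d in scored[:k] if score > 0]

def build_prompt(query: str, context: list[str]) -> str:
    """Step 2: feed the retrieved text to the model as context."""
    return "Context:\n" + "\n".join(context) + "\n\nQuestion: " + query

def generate(prompt: str) -> str:
    """Step 3: placeholder for a language model call (hypothetical)."""
    return "[model answer grounded in]\n" + prompt

question = "Who approves purchase orders?"
context = retrieve(question, KNOWLEDGE_BASE)
answer = generate(build_prompt(question, context))
```

Notice that everything the model sees depends on what `retrieve` returns. That single dependency is where most of the problems described below originate.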

Simple. Effective. Scalable. However, enterprise environments are not controlled. They are complex, layered, and constantly changing. As organizations moved from pilot to production, cracks started appearing:

- Different teams received different answers to the same query

- Context was lost across multiple documents

- Policies were ignored or misinterpreted

- AI could not learn from past decisions

In fact, Gartner estimates that up to 40% of executives’ time is still spent validating or reconciling data, even in digitally mature organizations. Leaders are checking data instead of acting on it. That tells you the issue is not just about access to information. It is about reliability, consistency, and trust.

RAG architecture solves retrieval. Enterprise AI needs to solve decision-making.

The Shift from Information to Intelligence

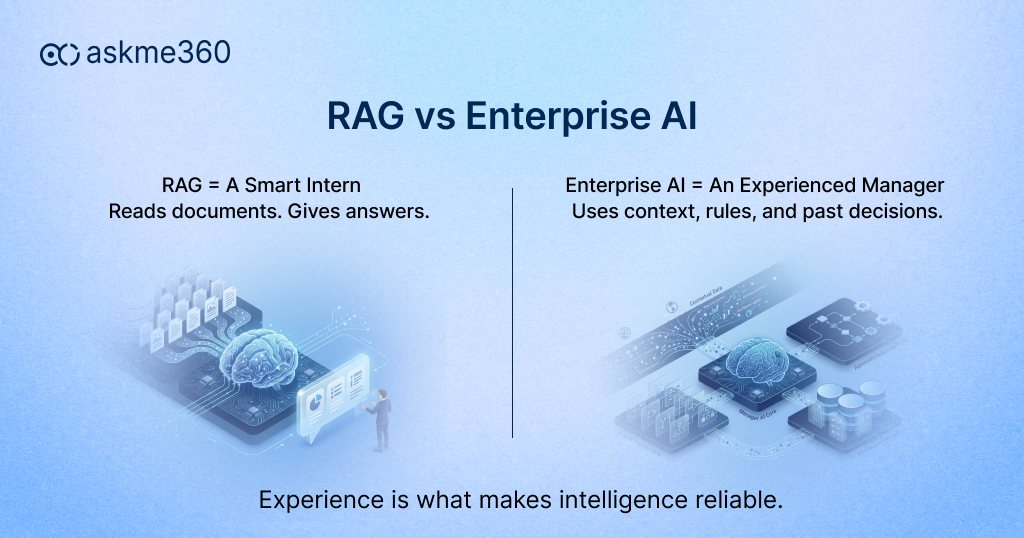

Think about how decisions are made in your organization. You don’t rely on documents alone. You rely on experience, past outcomes, business rules, and institutional knowledge. A seasoned manager doesn’t just look at data; they understand the context behind it. This is exactly where traditional AI systems fall short.

RAG treats knowledge as something to be fetched. But in reality, knowledge is something that evolves. It includes not just what is written, but what has been learned over time. That is why enterprises are now moving toward an AI systems architecture that goes beyond retrieval and starts building something deeper: what many are calling institutional intelligence.

Instead of just retrieving documents, AI systems are now expected to:

- Understand how decisions are made

- Apply business rules and policies

- Learn from outcomes

- Improve over time

This shift is what separates AI experiments from AI advantage. This is where AI begins to behave less like a search engine and more like an experienced member of your organization.

Limitations of RAG Architecture

The limitations of RAG become much clearer once it is deployed at scale.

1. Loss of Context

At the heart of most RAG systems lies document chunking. While this makes retrieval faster, it quietly breaks something critical, and that is context.

In enterprise environments, information is rarely standalone. A clause in a contract often depends on another section defined pages earlier. A financial report may rely on assumptions outlined at the beginning. Operational policies are layered, interconnected, and conditional.

RAG, however, retrieves isolated chunks. It brings back pieces of information, not the relationships between them. So, while the answer may look correct on the surface, it often lacks the deeper context required for accurate decision-making. And in business, missing context doesn’t just create confusion; it creates risk.
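A small example makes the problem concrete. The contract text and the chunk size below are invented for illustration. The point is only that fixed-size chunking can separate a clause from the definition it depends on.

```python
def chunk_fixed(text: str, size: int) -> list[str]:
    """Split text into fixed-size character chunks, ignoring document structure."""
    return [text[i:i + size] for i in range(0, len(text), size)]

# Hypothetical contract: Section 9 depends on a term defined in Section 1.
contract = (
    "Section 1. 'Net Price' means list price minus approved discounts. "
    "Section 9. Penalties are calculated as 2% of Net Price per week of delay."
)

chunks = chunk_fixed(contract, 70)

# A query about penalties matches only the chunk that mentions them.
hits = [c for c in chunks if "Penalties" in c]
```

The retrieved chunk uses the term "Net Price", but the sentence that defines it sits in a different chunk and never reaches the model.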

2. Inconsistent Decision-Making

Now imagine two teams within your organization asking the same question. Ideally, they should receive the same answer. But with traditional RAG systems, that consistency is not guaranteed.

Why? Because the response depends entirely on what gets retrieved in that moment. One team might receive information from one document, while another team gets a slightly different version from another source. The context may vary. The completeness may differ. And suddenly, you have two decisions based on two different interpretations of the same problem. This isn’t just a technical limitation. It’s a governance issue.

When decisions are inconsistent, alignment breaks. And when alignment breaks, so does trust in the system.

3. No Learning Loop

Another critical limitation is the absence of a true learning mechanism. When a RAG system generates an incorrect or incomplete response, the correction typically happens outside the system. A human steps in, fixes the answer, and moves forward.

But the system itself doesn’t retain that correction in a meaningful way. There is no feedback loop that allows it to learn, adapt, or improve over time. The same query, asked again in the future, can lead to the same mistake.

In an enterprise setting, this becomes a recurring inefficiency. Instead of evolving with usage, the system remains static, repeating errors rather than learning from them.

4. Weak Policy Enforcement

RAG systems are designed to retrieve information, not enforce rules. They surface what exists in the data. But they don’t inherently understand what should or should not be shared, recommended, or acted upon. In enterprise environments, this distinction is critical.

Decisions are governed by policies, compliance frameworks, approval hierarchies, access controls, and regulatory boundaries. A system that ignores these layers can unintentionally surface responses that violate internal policies or external regulations. That gap becomes critical as AI starts influencing real decisions.
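One way to narrow this gap is to place an explicit policy check between retrieval and generation. The sketch below assumes each chunk carries access-control metadata. The `Chunk` type, the `allowed_roles` field, and the role names are hypothetical, and real policy enforcement would cover far more than role checks.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_roles: frozenset[str]  # hypothetical access-control metadata

def enforce_policy(chunks: list[Chunk], user_role: str) -> list[Chunk]:
    """Drop retrieved chunks that the user's role is not cleared to see."""
    return [c for c in chunks if user_role in c.allowed_roles]

# Pretend these two chunks came back from retrieval.
retrieved = [
    Chunk("Standard payment terms are net 30.", frozenset({"sales", "finance"})),
    Chunk("Customer ACME is under credit review.", frozenset({"finance"})),
]

visible_to_sales = enforce_policy(retrieved, "sales")
visible_to_finance = enforce_policy(retrieved, "finance")
```

Only the filtered chunks are passed to the language model, so a restricted passage cannot leak into an answer simply because it was the closest match.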

What Is Replacing Basic RAG Architecture

Enterprises are not abandoning RAG. They are building on top of it and reshaping it into more capable systems.

Here are the key approaches leading this shift:

1. Graph-Enhanced RAG

One of the biggest changes is the introduction of graph-based approaches. Instead of treating data as isolated text, these systems map how the data is connected:

- Entities

- Dependencies

- Hierarchies

This enables multi-step reasoning. It allows the AI to understand how different pieces of information connect, which is essential for complex decision-making.
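As a rough illustration of multi-step reasoning over relationships, the sketch below stores a tiny knowledge graph as a dictionary and uses breadth-first search to find how two entities connect. The entity names and relation labels are invented for the example. Real deployments typically use a graph database rather than a Python dictionary.

```python
from collections import deque

# Hypothetical knowledge graph: node -> list of (relation, neighbor).
GRAPH = {
    "Supplier A": [("supplies", "Component X")],
    "Component X": [("used_in", "Product Z")],
    "Product Z": [("sold_under", "Contract 42")],
}

def find_path(graph, start, goal):
    """Breadth-first search returning the chain of relations from start to goal."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for relation, neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, path + [(node, relation, neighbor)]))
    return None  # the two entities are not connected

# "Which contract is exposed if Supplier A is delayed?"
path = find_path(GRAPH, "Supplier A", "Contract 42")
```

The result is a three-hop chain from supplier to component to product to contract. A chunk-based retriever would have to find all three facts by luck, while the graph makes the connection explicit.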

2. Agentic RAG

Another important shift is toward agentic systems. Here, the AI does not stop after a single retrieval. It continues to explore, validate, and refine its understanding before responding. It behaves more like an analyst who keeps digging until the answer is complete.

Instead of one-step retrieval:

- The system plans

- Retrieves multiple times

- Validates information

- Decides when it has enough context

This type of AI researches before answering.
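The loop described above can be sketched as follows. The planner, retriever, validator, and stopping rule are passed in as plain functions, and the toy versions shown here are hypothetical stand-ins. In a real agentic system, a language model would usually drive the planning and the decision to stop.

```python
def agentic_answer(question, plan, retrieve, validate, is_sufficient, max_steps=5):
    """Plan sub-queries, retrieve repeatedly, validate, and stop when there is enough context."""
    evidence = []
    pending = list(plan(question))
    steps = 0
    while pending and steps < max_steps:
        sub_query = pending.pop(0)
        for passage in retrieve(sub_query):
            # Keep only passages that pass validation and are not duplicates.
            if validate(passage) and passage not in evidence:
                evidence.append(passage)
        steps += 1
        if is_sufficient(evidence):
            break
    return evidence, steps

# Toy stand-ins (all hypothetical).
FACTS = {
    "credit limit": ["Customer ACME has a credit limit of 50000 USD."],
    "open invoices": ["Customer ACME has 42000 USD in open invoices."],
    "payment history": ["Customer ACME paid its last 6 invoices on time."],
}

evidence, steps = agentic_answer(
    "Can we accept a new 10000 USD order from ACME?",
    plan=lambda q: ["credit limit", "open invoices", "payment history"],
    retrieve=lambda sub_query: FACTS.get(sub_query, []),
    validate=lambda passage: "ACME" in passage,
    is_sufficient=lambda ev: len(ev) >= 2,
)
```

In this run the loop stops after two retrievals, because the credit limit and the open invoices are enough to answer the question. The `max_steps` cap keeps the agent from searching indefinitely.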

3. Hybrid Retrieval + Re-ranking

There is also a move toward hybrid retrieval methods that combine different search techniques to improve accuracy. This ensures that the system does not miss critical information simply because it was phrased differently.

A typical hybrid setup combines:

- Keyword search

- Semantic search

- Machine learning ranking

This improves accuracy significantly, because in enterprise data, terminology varies, context matters, and precision is non-negotiable.
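One simple, widely used way to merge a keyword ranking with a semantic ranking is reciprocal rank fusion. The article does not prescribe a specific method, so treat this as one possible choice. The document IDs are invented, and the constant `k=60` is a conventional default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists; documents ranked high in any list score well."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)

# Hypothetical results from two separate searches for the same query.
keyword_results = ["policy_7", "memo_2", "faq_1"]
semantic_results = ["faq_1", "policy_7", "wiki_9"]

fused = reciprocal_rank_fusion([keyword_results, semantic_results])
```

Documents that appear in both lists (`policy_7` and `faq_1`) rise to the top of `fused`. A machine learning re-ranker, where one is used, would then re-score this shortlist before it reaches the language model.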

4. Hierarchical Context Understanding

At the same time, organizations are moving away from rigid chunking of documents. Instead, they are preserving the natural structure of information so that context is maintained.

Instead of random chunks:

- Documents are structured

- Relationships are preserved

- Context flows naturally

This supports better reasoning and more accurate answers.
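A minimal sketch of structure-aware chunking: each paragraph is stored together with the heading path it sits under, so a retrieved passage still carries its place in the document. The sketch assumes headings are marked with leading `#` characters, and the policy text is invented for the example.

```python
def chunk_with_headings(lines):
    """Attach the current heading path to every paragraph."""
    path = []    # stack of headings from the top level down
    chunks = []
    for line in lines:
        if line.startswith("#"):
            level = len(line) - len(line.lstrip("#"))
            title = line.lstrip("#").strip()
            path = path[:level - 1] + [title]  # replace this level and anything deeper
        elif line.strip():
            chunks.append({"path": " > ".join(path), "text": line.strip()})
    return chunks

# Hypothetical policy document.
document = [
    "# Travel Policy",
    "## Approvals",
    "Trips over 5 days need VP sign-off.",
    "## Expenses",
    "Meals are capped at 60 USD per day.",
]

chunks = chunk_with_headings(document)
```

Each chunk now records that it belongs to, for example, "Travel Policy > Approvals", which lets both the retriever and the language model see where a sentence belongs.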

5. Talk-to-Data Architectures

Perhaps the most transformative shift is the rise of “talk to data” systems. Instead of searching for answers in documents, these systems directly query live data sources. When a user asks a question, the system computes the answer in real time.

Instead of searching documents, AI:

- Queries live databases

- Generates SQL

- Computes real-time answers

This is especially powerful for AI in ERP systems, where data is constantly changing and decisions need to be immediate.
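The flow can be illustrated with Python's built-in `sqlite3` module. The table, the sample rows, and the `question_to_sql` function are hypothetical. In a real system a language model would generate the SQL, and the query would need to be validated and run with read-only permissions.

```python
import sqlite3

def question_to_sql(question: str) -> str:
    """Hypothetical stand-in for a model that turns a question into SQL."""
    return "SELECT region, SUM(amount) FROM orders GROUP BY region ORDER BY region"

def run_read_only(conn: sqlite3.Connection, sql: str) -> list[tuple]:
    """Very basic guard: execute only SELECT statements."""
    if not sql.lstrip().upper().startswith("SELECT"):
        raise ValueError("only SELECT statements are allowed")
    return conn.execute(sql).fetchall()

# An in-memory database standing in for a live ERP data source.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("North", 120.0), ("South", 80.0), ("North", 30.0)],
)

rows = run_read_only(conn, question_to_sql("What are total sales by region?"))
```

Because the answer is computed from the table at query time, it reflects the data as it stands at that moment rather than a document written earlier.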

Why This Shift Will Impact Your Business

The impact of this shift is not theoretical. It is already visible in organizations that have moved beyond basic enterprise AI implementations. When AI systems are reliable, teams stop double-checking every output. Decision cycles become shorter. Errors reduce. Knowledge becomes reusable instead of being recreated every time.

McKinsey reports that AI-driven organizations can improve productivity by up to 40% when decision-making is automated and data-driven. But that only happens when the system is trusted.

Trust does not come from having more data. It comes from having the right AI systems architecture. Without it, AI remains a tool. With it, AI becomes part of how your business operates.

AI That Will Understand Your Business

The future of enterprise AI is not about generating better answers. It is about building systems that understand your business. Systems that can connect data, context, and outcomes. Systems that can apply rules, learn from feedback, and improve continuously.

RAG architecture played an important role in making AI usable for enterprises. It bridged the gap between language models and business data. But it is no longer the destination. Enterprises that continue to rely on basic RAG will face limitations in consistency, governance, and scalability. On the other hand, those that evolve their enterprise AI architecture will unlock something far more valuable.

In 2026, the competitive advantage will not come from having AI. It will come from how well your AI architecture is designed.

How HIPL and askme360 Are Enabling This Shift

At HIPL, this shift is not theoretical. It is something we have been working on closely with enterprises that rely on complex systems like ERP, where data is vast, interconnected, and critical to decision-making.

With askme360, the focus is not just on enabling access to data, but on transforming how your teams interact with it. The platform connects directly with enterprise systems and allows users to ask questions in natural language, removing the need for static reports and manual analysis.

What makes it relevant in today’s context is its ability to go beyond basic RAG architecture. It combines conversational AI with structured data access, contextual understanding, and enterprise-grade governance. This ensures that the insights your teams receive are not just fast, but also accurate and reliable.

The result is a more responsive organization, where decisions are driven by real-time intelligence rather than delayed reporting cycles. For businesses looking to move from AI experimentation to meaningful impact, this is where the transition becomes real.
